Query Fanout Estimator

Last updated: December 11, 2025

The Query Fanout Estimator agent predicts how Answer Engines (such as ChatGPT, Claude, Gemini, or Perplexity) are likely to expand a single user prompt into multiple web search queries. These expanded searches, called query fanouts, are what actually drive retrieval and citations in AI-generated answers.

This agent helps you understand the search layer behind the answer: which queries an Answer Engine might run, what facets of intent it will explore, and where your content needs coverage to be cited more often.

See this document for additional instructions on adding this node to a workflow, and this document for a full list of available nodes.

When to use this agent

Use the Query Fanout Estimator when you want to:

See how a specific user prompt is likely to be decomposed into multiple web searches

Identify the high-intent queries that matter most for a given prompt

Plan content that covers the full fanout space behind important prompts

Feed likely fanout queries into Answer Engine Insights, content briefs, or keyword clustering workflows

Model how Answer Engines translate prompts into retrieval behavior for AEO strategy

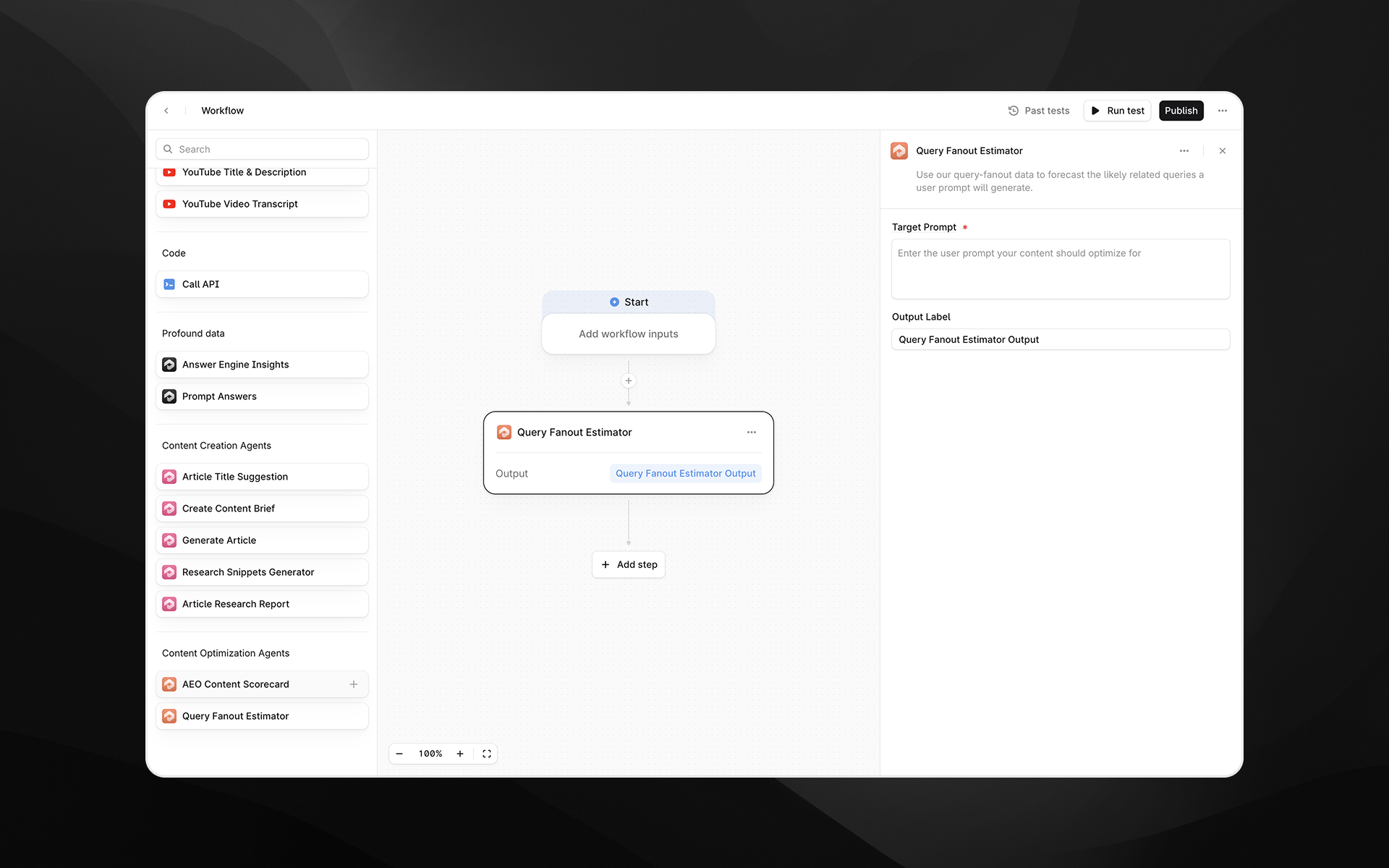

Agent inputs

Target Prompt (required)

The user prompt that your content should optimize for.

Examples:

“How do keyword hierarchies work in AI search?”

“Best tools for monitoring AI citations for my brand”

“How does query fan-out impact SEO strategy?”

The agent will estimate which web search queries an Answer Engine is likely to run when answering this prompt.

Output Label (required)

A descriptive name for the agent’s output.

Examples:

query_fanout_estimate

predicted_fanout_queries

You will use this label to reference the fanout data in downstream steps (e.g., content briefs, research steps, reports).

How the agent works behind the scenes

Although this agent appears as a single node, it runs a two-step pipeline:

1. Retrieve real query fanout examples

Profound maintains a knowledge base of historical query fanouts: real pairs of a user prompt and the web search queries that Answer Engines actually ran behind the scenes to answer it (the “fanout” set).

Given your Target Prompt, the agent:

Performs a semantic search over this fanout dataset

Retrieves the most similar prompt–fanout examples

Passes those examples forward as in-context demonstrations

These examples are not restricted to the same topic; they illustrate how Answer Engines in general expand prompts into multiple searches.
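To make the retrieval step concrete, here is a minimal Python sketch of nearest-neighbor search over prompt → fanout pairs. It is not Profound's implementation: a production system would presumably use a learned embedding model, whereas this sketch substitutes a toy bag-of-words vector and cosine similarity so it runs with the standard library alone. The `FanoutExample` type, the `retrieve_examples` function, and the small knowledge base are all hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class FanoutExample:
    """One historical record: a user prompt and the searches run for it."""
    prompt: str
    fanout: list[str]


def embed(text: str) -> Counter:
    # Toy stand-in for a real embedding model: lowercase bag-of-words counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors (0.0 if either is empty).
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve_examples(target: str, kb: list[FanoutExample], k: int = 3) -> list[FanoutExample]:
    """Return the k knowledge-base examples whose prompts are most similar to target."""
    q = embed(target)
    ranked = sorted(kb, key=lambda ex: cosine(q, embed(ex.prompt)), reverse=True)
    return ranked[:k]


# Hypothetical knowledge base of prompt -> fanout pairs.
kb = [
    FanoutExample("best crm for small business", ["top crm software 2025", "crm pricing comparison"]),
    FanoutExample("how do ai answers pick sources", ["how llms choose citations", "ai search ranking factors"]),
    FanoutExample("what is seo", ["seo definition", "how seo works"]),
]

top = retrieve_examples("How do AI-generated answers choose citations?", kb, k=1)
print(top[0].prompt)  # how do ai answers pick sources
```

The `k` parameter controls how many demonstrations are passed forward; more examples give the model more patterns to imitate at the cost of a longer prompt.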

2. Estimate fanout for your prompt using an LLM

Next, the agent uses a model configured specifically to “think like” an Answer Engine’s retrieval layer:

It receives:

Your Target Prompt

A set of historical prompt + fanout examples from Profound’s knowledge base

It is instructed to:

Infer how an Answer Engine would break your prompt into multiple web search queries

Generate realistic, high-intent, semantically diverse search queries

Focus on sub-queries that would retrieve relevant sources (not just phrasing variants)

Capture different facets of the original intent (definitions, comparisons, how-to steps, evaluation, etc.)

The model returns a list of predicted web search queries—your estimated query fanout.
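This second step amounts to few-shot prompting: the retrieved pairs become demonstrations placed ahead of your Target Prompt. The sketch below only assembles that prompt text. The instruction wording is a paraphrase of the goals listed above, not Profound's actual system prompt, and `build_fanout_prompt` is a hypothetical helper. Sending the result to a model is omitted because the model and API the agent uses are not documented here.

```python
# Paraphrase of the instructions listed above; the real wording is an assumption.
INSTRUCTIONS = (
    "You are simulating an Answer Engine's retrieval layer. "
    "Break the target prompt into the web search queries you would run. "
    "Return realistic, high-intent, semantically diverse queries that would retrieve "
    "relevant sources (not mere rephrasings), covering different facets of intent "
    "such as definitions, comparisons, how-to steps, and evaluation. "
    "Answer with one comma-separated line."
)


def build_fanout_prompt(target: str, examples: list[tuple[str, list[str]]]) -> str:
    """Assemble a few-shot prompt: instructions, demonstrations, then the target."""
    parts = [INSTRUCTIONS, ""]
    for i, (prompt, fanout) in enumerate(examples, start=1):
        # Each demonstration shows a historical prompt and the searches run for it.
        parts.append(f"Example {i}")
        parts.append(f"User prompt: {prompt}")
        parts.append(f"Fanout: {', '.join(fanout)}")
        parts.append("")
    # The target prompt comes last, with an open "Fanout:" slot for the model to fill.
    parts.append(f"User prompt: {target}")
    parts.append("Fanout:")
    return "\n".join(parts)


demo = [("what is seo", ["seo definition", "how seo works"])]
prompt_text = build_fanout_prompt("How does query fan-out impact SEO strategy?", demo)
print(prompt_text)
```

Ending the prompt with an empty `Fanout:` line mirrors the format of the demonstrations, which nudges the model to answer in the same comma-separated shape.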

Output

The agent returns a structured text output containing likely search queries that Answer Engines would fan out from your prompt. For example:

what is query fan-out in ai search, how do llms expand user prompts into multiple web searches, impact of query fan-out on brand visibility, examples of query fan-out in google ai overviews, how to optimize content for query fan-out

You can parse this list into an array in downstream steps or feed it directly to other agents.
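If a downstream step needs an array, a small parser is enough. This Python sketch assumes the output is a single comma-separated line, as in the example above, and that individual queries never contain commas themselves. It trims stray whitespace and drops case-insensitive duplicates. The `context` dictionary keyed by the Output Label is a hypothetical stand-in for however your workflow passes data between nodes.

```python
def parse_fanout(raw: str) -> list[str]:
    """Split comma-separated fanout output into clean, de-duplicated queries."""
    seen: set[str] = set()
    queries: list[str] = []
    for part in raw.split(","):
        q = part.strip()
        # Skip empty fragments and repeats that differ only in letter case.
        if q and q.lower() not in seen:
            seen.add(q.lower())
            queries.append(q)
    return queries


raw_output = (
    "what is query fan-out in ai search, how do llms expand user prompts into multiple web searches, "
    "impact of query fan-out on brand visibility, examples of query fan-out in google ai overviews, "
    "how to optimize content for query fan-out"
)

# Hypothetical workflow context keyed by the Output Label.
context = {"query_fanout_estimate": parse_fanout(raw_output)}
print(len(context["query_fanout_estimate"]))  # 5
```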

Example workflow: Fanout-informed content planning

Goal: Build a content plan that covers the full set of queries an Answer Engine might run for a critical prompt.

Query Fanout Estimator

Input: Target Prompt (e.g., “How do AI-generated answers choose citations?”)

Output: Predicted fanout queries

Research & Insights

For each fanout query:

Use Perplexity Search or Web Page Scrape to gather citations

Use Research Snippets Generator to extract facts and quotes

Create Content Brief

Feed the fanout queries + research into the Create Content Brief agent

Ensure the brief mandates coverage for each high-intent sub-query

Generate Article

Use the brief to generate an article that explicitly addresses the main prompt and its fanouts

AEO Content Scorecard

Score the final article, checking whether it covers enough of the fanout space implied by the prompt

This workflow ensures your content is optimized not just for a single phrasing but for the full set of searches Answer Engines are likely to run behind that prompt.
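The scorecard step asks whether the article covers enough of the fanout space. One crude way to approximate that yourself is token overlap between each fanout query and each section of the draft. This is a hypothetical heuristic, not how the AEO Content Scorecard computes its score; `fanout_coverage`, the stopword list, and the 0.5 threshold are illustrative assumptions.

```python
import re

# Common words ignored when matching; an illustrative, incomplete list.
STOPWORDS = {"a", "an", "and", "the", "of", "in", "on", "for", "to", "how", "do", "does", "is", "what"}


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens, a crude proxy for topical overlap."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def fanout_coverage(
    queries: list[str], sections: list[str], threshold: float = 0.5
) -> tuple[float, list[str]]:
    """Return (share of queries covered, uncovered queries).

    A query counts as covered when at least `threshold` of its non-stopword
    tokens appear together in a single section of the article.
    """
    section_tokens = [tokens(s) for s in sections]
    uncovered: list[str] = []
    for query in queries:
        q_tokens = tokens(query) - STOPWORDS
        if not q_tokens:
            uncovered.append(query)  # nothing meaningful to match on
            continue
        best = max((len(q_tokens & st) / len(q_tokens) for st in section_tokens), default=0.0)
        if best < threshold:
            uncovered.append(query)
    share = (len(queries) - len(uncovered)) / len(queries) if queries else 0.0
    return share, uncovered


queries = ["how llms choose citations", "ai search ranking factors", "citation tracking tools"]
sections = ["How LLMs choose which citations to show", "Ranking factors in AI search"]
share, missing = fanout_coverage(queries, sections)
print(f"{share:.0%} covered; missing: {missing}")
```

Exact token matching misses plurals and synonyms (for instance, "citation" does not match "citations"), so treat the uncovered list as a starting point for manual review rather than a verdict.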

Best practices

Use natural-language prompts that mirror real user questions; the fanout will be more realistic.

Store the fanout output in a reusable label (e.g., query_fanout_estimate) so it can power multiple downstream steps.

Combine this agent with Answer Engine Insights to see how often your site appears across the predicted fanout queries.

Use fanout results to design FAQs, headings, and section structure that map directly to the likely sub-queries Answer Engines care about.
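The last two practices can be combined: once you know which domains are cited for each predicted fanout query, the queries where your site is missing point to FAQs or headings worth adding. This sketch assumes you already have a query → cited-domains mapping from a citation-monitoring source such as Answer Engine Insights; the data, the `visibility_report` and `to_heading` helpers, and the question-word list are hypothetical, and example.com stands in for your domain.

```python
# Leading words that suggest a query reads as a question (illustrative list).
QUESTION_WORDS = {"what", "how", "why", "when", "which", "who", "where", "can", "does", "is", "are", "should"}


def visibility_report(citations: dict[str, list[str]], domain: str) -> tuple[float, list[str]]:
    """Return (share of fanout queries citing `domain`, queries where it is absent)."""
    if not citations:
        return 0.0, []
    gaps = [query for query, domains in citations.items() if domain not in domains]
    share = 1 - len(gaps) / len(citations)
    return share, gaps


def to_heading(query: str) -> str:
    """Capitalize a query for use as a heading; add '?' if it reads as a question."""
    heading = query.strip()
    if not heading:
        return heading
    heading = heading[0].upper() + heading[1:]
    if heading.split()[0].lower() in QUESTION_WORDS and not heading.endswith("?"):
        heading += "?"
    return heading


# Hypothetical citation data for three predicted fanout queries.
citations = {
    "what is query fan-out in ai search": ["example.com", "example.org"],
    "impact of query fan-out on brand visibility": ["example.org"],
    "how to optimize content for query fan-out": ["example.org"],
}

share, gaps = visibility_report(citations, "example.com")
print(f"Cited for {share:.0%} of fanout queries")
for query in gaps:
    print("Candidate heading:", to_heading(query))
```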