AEO Content Scorecard

Last updated: December 11, 2025

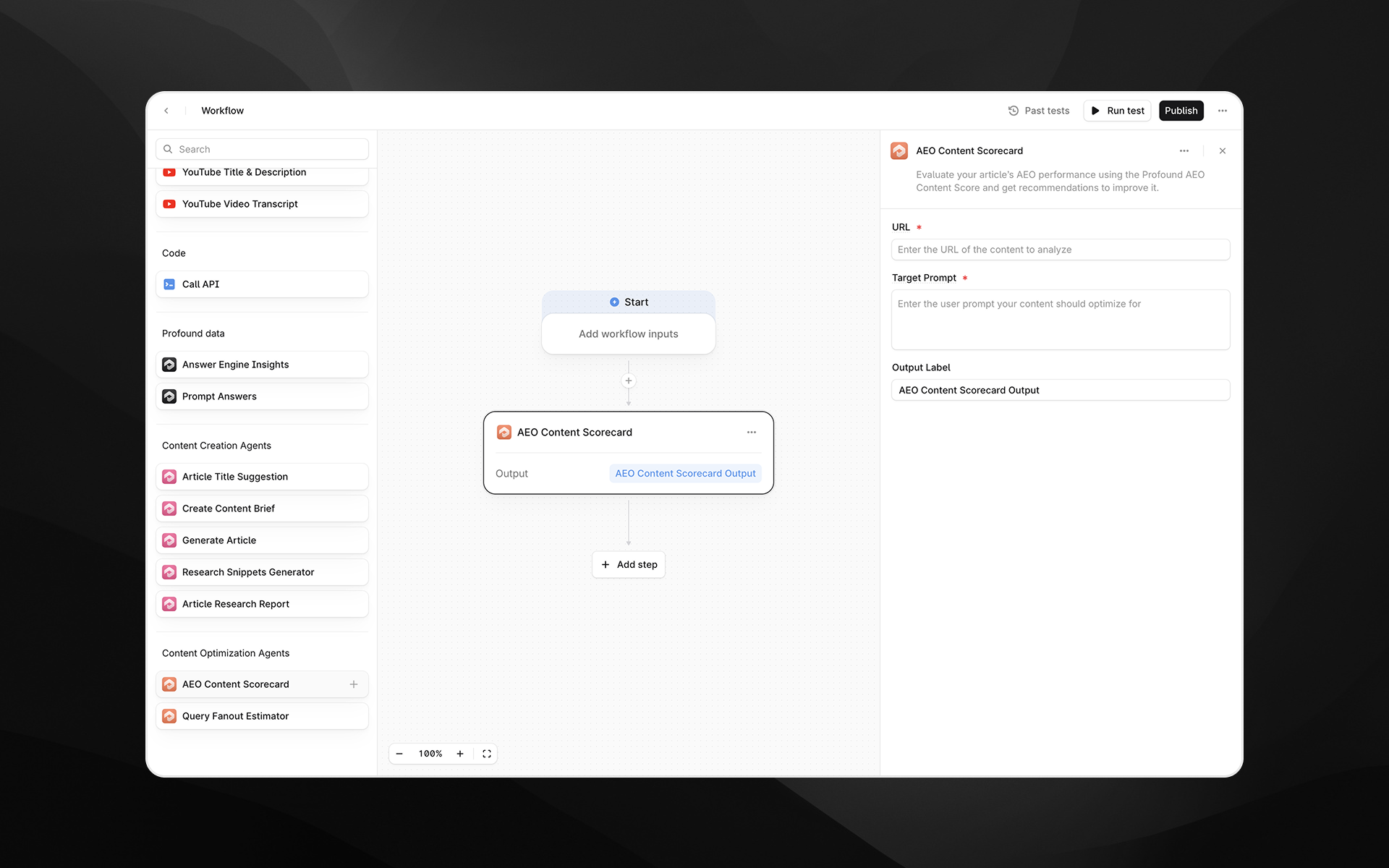

The AEO Content Scorecard agent evaluates how well a given article performs for Answer Engine Optimization (AEO). It analyzes the URL you provide, retrieves and inspects the content, and produces a structured scorecard with category-level scoring, weighted factors, and specific, actionable recommendations.

This agent uses the same scoring logic that powers Profound’s Content Optimization feature, ensuring consistency between your workflow outputs and the optimization experience in the Profound platform.

Use this agent when you want to diagnose how well an article aligns with the way AI systems evaluate relevance, clarity, structure, and answerability—and when you want to programmatically trigger optimization workflows based on these results.

See this document for additional instructions on adding this node to a workflow, and this document for a full list of available nodes.

When to use this agent

Use the AEO Content Scorecard agent to:

Audit existing content for AEO readiness

Prioritize content refresh opportunities

Trigger automated improvement workflows

Benchmark your content against top-ranking competitors

Provide structured optimization recommendations to writers or LLMs

Standardize content scoring across your organization

This agent fits both human-driven editorial processes and fully automated content optimization pipelines.

Agent inputs

URL (required)

Enter the URL of the article you want to evaluate.

This can be:

A published content page

A staging URL

A temporary hosting URL

Any accessible webpage containing the article text

The agent retrieves the content and applies a full analysis across readability, structure, answerability, machine readability, and more.

Target Prompt (optional)

Enter the user prompt or question your content should be optimized for.

Examples:

“How do keyword hierarchies work in AI search?”

“What is predictive maintenance in manufacturing?”

This helps evaluate whether the article directly matches user intent as seen in AI-generated answers.

Output Label (required)

Assign a descriptive label to access the scorecard output in downstream steps.

Examples:

aeo_scorecard

content_score

scorecard_output

How the agent works behind the scenes

The AEO Content Scorecard agent runs a multi-step evaluation pipeline:

1. Content retrieval

The agent fetches the webpage content from the provided URL and prepares it for analysis.

2. AEO-focused content parsing

The underlying models identify:

Text structure

Header hierarchy

Answerability signals

Entity coverage

URL patterns

Schema presence

Internal linking

Machine readability cues

3. Scoring across AEO categories

The agent produces a weighted score across categories, commonly including:

Readability

Content Freshness

Content Structure

Answerability Signals

Machine Readability

Information Density

Each category is scored and flagged (e.g., green, yellow, red).
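The weighted scoring in step 3 can be illustrated with a minimal Python sketch. The category weights, the 0 to 100 scale per category, and the green/yellow/red thresholds below are hypothetical placeholders chosen for illustration; Profound's actual weights and cutoffs are not stated in this document.

```python
# Illustrative only: weights (in percent) and thresholds are assumptions,
# not the values used by Profound.
CATEGORY_WEIGHTS = {
    "Readability": 15,
    "Content Freshness": 15,
    "Content Structure": 20,
    "Answerability Signals": 25,
    "Machine Readability": 15,
    "Information Density": 10,
}


def flag(score: float, green_at: float = 75, yellow_at: float = 50) -> str:
    """Map a 0-100 category score to a traffic-light flag."""
    if score >= green_at:
        return "green"
    if score >= yellow_at:
        return "yellow"
    return "red"


def weighted_score(category_scores: dict[str, float]) -> float:
    """Weighted average of the supplied category scores."""
    total_weight = sum(CATEGORY_WEIGHTS[name] for name in category_scores)
    weighted_sum = sum(
        CATEGORY_WEIGHTS[name] * score for name, score in category_scores.items()
    )
    return weighted_sum / total_weight


scores = {
    "Readability": 80,
    "Content Freshness": 60,
    "Content Structure": 70,
    "Answerability Signals": 80,
    "Machine Readability": 40,
    "Information Density": 90,
}
final = weighted_score(scores)
flags = {name: flag(score) for name, score in scores.items()}
print(final, flags)
```

Dividing by the sum of the weights actually used keeps the result on a 0 to 100 scale even if a category is missing from the input.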

4. Top recommendations

The agent identifies the highest-impact improvements based on:

AEO principles

Competitor benchmarks

Structural inconsistencies

Opportunities to simplify, clarify, or enhance the content

Recommendations include:

Before/after examples

URL improvements

Schema suggestions

Title enhancements

Subheading updates

Opportunities to increase topical relevance

5. Final score assembly

The agent compiles a structured scorecard containing:

Final Score

Target Score Range (based on top competitor performance)

Detailed category breakdown

Actionable recommendations

Output

The resulting output is a structured report similar to the following example:

Example Output:

AEO Content Scorecard

URL: https://www.tryprofound.com/blog/introducing-keyword-hierarchies

Final Score: 72/100

Target Zone (Top Competitors): 62–72

Category Breakdown

Top Recommendations

Enhance Subheading Relevance

Improve clarity by explicitly mentioning “Keyword Hierarchies in AI Conversations.”

Before: “The technical innovation”

After: “How Keyword Hierarchies Enhance AI Conversations”

Simplify the URL Structure

Shorten the URL to make it more topical and easier for AI systems to parse.

Implement FAQ Schema Markup

Add FAQ entries such as “What are keyword hierarchies?” to better support answer engines.

Add Superlatives to the Title

Example improvement:

Before: “Introducing Keyword Hierarchies”

After: “Discover the Most Effective Keyword Hierarchies for AI Conversations”
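If a downstream step receives the scorecard as text shaped like the example above, the headline numbers could be pulled out with a small parser. This is a sketch under that assumption: `parse_scorecard_summary` and `below_target_zone` are hypothetical helpers, the real output may be structured differently, and the "refresh only when below the target zone" rule is an illustrative policy rather than a documented one.

```python
import re
from typing import Optional, TypedDict


class ScorecardSummary(TypedDict):
    final_score: int
    target_low: Optional[int]
    target_high: Optional[int]


def parse_scorecard_summary(text: str) -> ScorecardSummary:
    """Extract the headline numbers from a text scorecard (hypothetical helper)."""
    score = re.search(r"Final Score:\s*(\d+)\s*/\s*100", text)
    if score is None:
        raise ValueError("no 'Final Score: N/100' line found")
    # Accept an en dash or a plain hyphen between the two bounds.
    zone = re.search(r"Target Zone[^:\n]*:\s*(\d+)\s*[–-]\s*(\d+)", text)
    return {
        "final_score": int(score.group(1)),
        "target_low": int(zone.group(1)) if zone else None,
        "target_high": int(zone.group(2)) if zone else None,
    }


def below_target_zone(summary: ScorecardSummary) -> bool:
    """Assumed policy: refresh only when the score is under the zone's low end."""
    low = summary["target_low"]
    return low is not None and summary["final_score"] < low


sample = "Final Score: 72/100\nTarget Zone (Top Competitors): 62–72"
summary = parse_scorecard_summary(sample)
print(summary, below_target_zone(summary))
```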

Example Workflow: AEO Content Refresh

Here is how you might use this agent inside a real optimization pipeline:

Goal: Automatically improve existing articles and republish them.

Workflow Steps:

AEO Content Scorecard

Input the article URL

Retrieve a full AEO scorecard and improvement recommendations

Prompt LLM: Generate Improved Article

Provide the scorecard and instruct the model to apply the recommended improvements

Rewrite the article with updated structure, headings, clarity, and answerability

Maintain original brand voice

Prompt LLM: Validate Against Scorecard Criteria

Ensure the updated article meets or exceeds recommended category scores

Publish or Update Step

Use Call API or CMS publishing logic to update the article on your site

Example LLM prompt:

Here is the AEO Content Scorecard for this article. Apply all recommendations, rewriting the content where necessary. Produce an improved article that would score at least 10 points higher while maintaining factual accuracy and brand voice.

{{aeo_scorecard}}

This workflow allows teams to create scalable, repeatable content optimization systems powered by Profound.
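To make the role of the output label concrete, the sketch below mimics how a `{{label}}` placeholder in a prompt might be replaced with an upstream step's output. The platform performs this substitution itself; `render_prompt` is a hypothetical stand-in, and its placeholder syntax rules (optional whitespace, word characters only) are assumptions.

```python
import re

# Matches {{label}} with optional whitespace inside the braces (assumed syntax).
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template: str, outputs: dict[str, str]) -> str:
    """Replace each {{label}} with the matching upstream output."""

    def substitute(match: re.Match) -> str:
        label = match.group(1)
        if label not in outputs:
            raise KeyError(f"no upstream output labeled {label!r}")
        return outputs[label]

    return PLACEHOLDER.sub(substitute, template)


template = (
    "Here is the AEO Content Scorecard for this article. "
    "Apply all recommendations.\n\n{{aeo_scorecard}}"
)
prompt = render_prompt(template, {"aeo_scorecard": "Final Score: 72/100"})
print(prompt)
```

Raising an error on an unknown label, rather than leaving the placeholder in place, makes a mistyped output label visible before the prompt reaches the model.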

Best Practices

Use the Target Prompt field whenever the article exists to answer a specific question.

Pair this agent with Generate Article for iterative optimization loops.

Use clear output labels when chaining scorecards into rewrite steps.

Run this agent periodically in recurring workflows to detect content decay.
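For the recurring-workflow practice, content decay could be detected by comparing the latest final score with earlier runs. The sketch below assumes you store each run's date and final score yourself; `detect_decay` and the 5-point drop threshold are hypothetical choices, not part of the agent.

```python
from datetime import date


def detect_decay(history: list[tuple[date, int]], drop_threshold: int = 5) -> bool:
    """Return True when the newest score fell at least `drop_threshold`
    points below the best earlier score."""
    if len(history) < 2:
        return False  # nothing to compare against yet
    ordered = sorted(history, key=lambda run: run[0])  # oldest first
    latest_score = ordered[-1][1]
    best_earlier = max(score for _, score in ordered[:-1])
    return best_earlier - latest_score >= drop_threshold


runs = [
    (date(2025, 9, 1), 78),
    (date(2025, 10, 1), 76),
    (date(2025, 11, 1), 71),
]
print(detect_decay(runs))
```

Comparing against the best earlier score, rather than only the previous run, catches slow declines spread over several runs.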