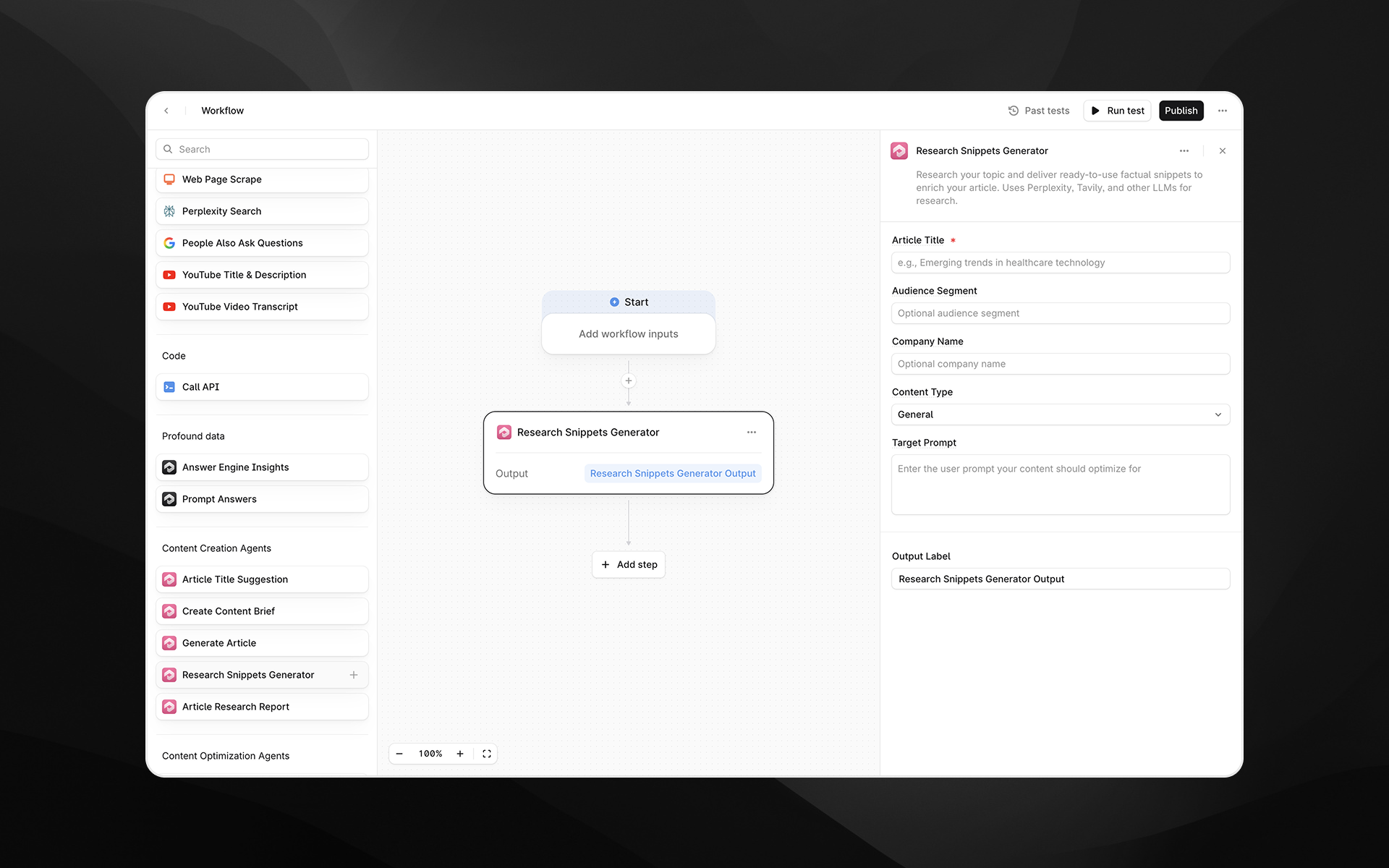

Research Snippets Generator

Last updated: December 11, 2025

The Research Snippets Generator agent produces a curated set of factual, citation-backed research snippets that you can use to enrich articles, briefs, and other content assets. The snippets are extracted directly from high-authority sources using structured research workflows, which reduces the risk of hallucinations and keeps every claim traceable to its source.

Each snippet is short, quotable, and includes its source URL, making it ready for direct insertion into content or for use in downstream analysis or generation steps.

Unlike broad summarization agents, this one focuses on evidence, not narrative: facts, statistics, quotes, and verifiable claims.

See this document for additional instructions on adding this node to a workflow, and this document for a full list of available nodes.

When to use this agent

Use the Research Snippets Generator when you need:

Accurate facts and citations to support an article

Quickly sourced statistics, definitions, quotes, or authoritative statements

Verifiable evidence for AEO-friendly content

Study materials for briefs, outlines, or LLM-driven content creation

A clean set of structured research snippets for editorial workflows

This agent is ideal for writers, strategists, and automated content systems that require reliability and transparency.

Agent inputs

Article Title (required)

The topic or title that the agent should research.

Examples:

“Emerging trends in healthcare technology”

“How generative AI improves supply chain forecasting”

“What is AI visibility and why does it matter?”

This title directs the research tools and extraction models.

Audience Segment (optional)

Adds context so the agent can select sources and snippets that better match the intended reader.

Examples:

“Enterprise CIOs”

“Healthcare operations managers”

“B2B SaaS marketers”

Company Name (optional)

Helps the agent align source selection and snippet style with your company's context (e.g., B2B tone vs. consumer tone).

Content Type (optional)

Defaults to General. Other values may be available depending on your workspace configuration.

This setting helps the agent adjust how granular or technical the snippets are.

Target Prompt (optional)

If you want the research snippets to specifically support a user prompt used in AI search or AEO workflows, enter it here.

Example:

“How does AI reduce logistics costs?”

Output Label (required)

Provide a variable name for the resulting JSON structure containing the snippets.

Examples:

research_snippets

evidence_blocks

snippet_list
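The inputs above can be summarized as a small data structure. The sketch below is illustrative only: the class name `SnippetAgentInputs` and its field names are assumptions chosen to mirror the input labels, not the product's actual API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SnippetAgentInputs:
    """Hypothetical container mirroring the agent's input fields."""

    article_title: str                      # required
    output_label: str                       # required
    audience_segment: Optional[str] = None  # optional
    company_name: Optional[str] = None      # optional
    content_type: str = "General"           # optional; documented default
    target_prompt: Optional[str] = None     # optional

    def __post_init__(self) -> None:
        # Both required fields must be non-empty strings.
        if not self.article_title.strip():
            raise ValueError("Article Title is required")
        if not self.output_label.strip():
            raise ValueError("Output Label is required")


# Only the two required fields plus one optional field are supplied here.
inputs = SnippetAgentInputs(
    article_title="How generative AI improves supply chain forecasting",
    output_label="research_snippets",
    audience_segment="Enterprise CIOs",
)
```

Leaving the optional fields unset keeps the defaults described above, including General for Content Type.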

How the agent works behind the scenes

Although this agent appears as a single node, it performs a full research pipeline internally. The underlying workflow includes:

1. Topic-focused research

The agent queries multiple research tools and models—including systems like Perplexity and Tavily—to gather:

High-authority webpages

Government or academic reports

Industry data

News articles

Trusted organizational publications

This process surfaces a large pool of raw research material.

2. Aggregation and de-duplication

All retrieved research is consolidated into a structured dataset.

The workflow filters out:

Low-authority sources

Redundant information

Irrelevant tangents

Non-citable content

Only reliable materials move forward.
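The filtering step can be pictured with a minimal sketch. The agent's real authority rules and data format are not documented here, so the blocklist, the `url` and `text` keys, and the function names below are all assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical blocklist; the agent's actual authority rules are not documented.
LOW_AUTHORITY_DOMAINS = {"content-farm.example"}


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so near-identical passages compare equal."""
    return " ".join(text.lower().split())


def aggregate(results: list[dict]) -> list[dict]:
    """Drop non-citable, low-authority, and duplicate results; keep first occurrences."""
    seen: set[str] = set()
    kept: list[dict] = []
    for item in results:
        url = item.get("url", "")
        text = item.get("text", "")
        if not url or not text:
            continue  # non-citable: no source URL or no content
        host = urlparse(url).netloc.lower().removeprefix("www.")
        if host in LOW_AUTHORITY_DOMAINS:
            continue  # low-authority source
        key = normalize(text)
        if key in seen:
            continue  # redundant information
        seen.add(key)
        kept.append(item)
    return kept


# Placeholder records, not real research results.
raw = [
    {"url": "https://agency.example/report", "text": "Sample fact one."},
    {"url": "https://mirror.example/copy", "text": "sample  fact ONE."},
    {"url": "https://www.content-farm.example/post", "text": "Sample fact two."},
    {"url": "", "text": "Sample fact three."},
]
clean = aggregate(raw)
```

Relevance filtering ("irrelevant tangents") is omitted because it would need a topic model or an LLM judgment rather than a simple rule.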

3. Evidence extraction

An LLM configured as an AEO Snippet Extractor processes the structured research and identifies extractable facts.

Snippets must meet strict criteria:

At least 20 snippets must be produced (goal: 20–40)

Each snippet must be factually accurate

Each snippet must be directly supported by a cited source

Snippets must be under 160 characters

Snippets must be extracted from source text, not invented, to avoid hallucinations

Sources should preferably be .gov or .edu sites, associations, reports, or major media

Verbatim text is allowed when accuracy is critical

Every snippet includes a URL

These criteria are designed for high trustworthiness, especially in enterprise or regulated contexts.
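The mechanical parts of these criteria (count, length, and presence of a URL) are easy to check downstream. The sketch below is one possible validator; the `snippet` and `url` key names are assumptions about the output shape, and factual accuracy itself cannot be verified this way.

```python
from urllib.parse import urlparse

MAX_CHARS = 160     # snippets must be under 160 characters
MIN_SNIPPETS = 20   # at least 20 snippets per run


def snippet_problems(snippet: dict) -> list[str]:
    """Return the rule violations found in a single snippet record."""
    problems = []
    text = snippet.get("snippet", "")
    url = snippet.get("url", "")
    if not text.strip():
        problems.append("empty text")
    if len(text) >= MAX_CHARS:
        problems.append("too long")
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        problems.append("missing or invalid URL")
    return problems


def batch_problems(snippets: list[dict]) -> list[str]:
    """Check the batch size, then every snippet, and collect all violations."""
    problems = []
    if len(snippets) < MIN_SNIPPETS:
        problems.append(
            f"only {len(snippets)} snippets; expected at least {MIN_SNIPPETS}"
        )
    for index, snippet in enumerate(snippets):
        for problem in snippet_problems(snippet):
            problems.append(f"snippet {index}: {problem}")
    return problems
```

An empty list from `batch_problems` means the batch passed every mechanical check.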

4. JSON formatting

The agent packages the results into a clean JSON structure in which each snippet is paired with its source URL.

This structure is ideal for downstream workflows such as:

Article generation

Snippet clustering

Fact-checking

Scorecards

Human editorial review
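The exact output schema is not reproduced in this document. As an illustration only, the sketch below assumes an object with a `snippets` array whose items carry `snippet` and `url` keys (all three key names are hypothetical) and groups the snippets by source, which can help with editorial review.

```python
import json

# Placeholder payload; the key names are assumptions, not the documented schema.
raw_output = """
{
  "snippets": [
    {"snippet": "Placeholder fact one.", "url": "https://agency.example/report"},
    {"snippet": "Placeholder fact two.", "url": "https://university.example/study"},
    {"snippet": "Placeholder fact three.", "url": "https://agency.example/report"}
  ]
}
"""

data = json.loads(raw_output)

# Group snippet text by source URL so a reviewer can check one source at a time.
by_source: dict[str, list[str]] = {}
for item in data["snippets"]:
    by_source.setdefault(item["url"], []).append(item["snippet"])
```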

Output

You receive a structured JSON object containing 20–40 evidence snippets, each with a corresponding URL.

These snippets can be used to:

Enrich articles

Support claims in content briefs

Provide trustworthy evidence for LLM prompts

Generate research summaries

Accelerate editorial workflows

Power automated article creation pipelines

Example usage

1. Fact-enriched article generation

Generate a title

Run Research Snippets Generator

Feed the snippets and the brief into Generate Article

Produce a deeply factual article grounded in authoritative sources

2. Human editorial workflows

Editors can pull snippets directly into outlines, paragraphs, or fact boxes.

3. Automated content QA

You can use snippets to validate whether article content aligns with cited facts.
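One simple form of automated QA is to flag figures in a draft that no snippet supports. The heuristic below is a deliberately naive sketch, not the agent's own method: it compares numeric tokens only and assumes each snippet record has a `snippet` key.

```python
import re

# Matches integers, decimals, and thousands-separated numbers, with an optional "%".
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def unsupported_figures(article: str, snippets: list[dict]) -> list[str]:
    """Return figures that appear in the article but in no snippet text."""
    supported: set[str] = set()
    for record in snippets:
        supported.update(NUMBER.findall(record["snippet"]))
    return [figure for figure in NUMBER.findall(article) if figure not in supported]


# Placeholder text, not real statistics.
draft = "Adoption reached 42% in the sample, up from 17%."
evidence = [
    {"snippet": "Adoption reached 42% in the sample.", "url": "https://agency.example/report"},
]
flagged = unsupported_figures(draft, evidence)
```

Here `flagged` contains only `17%`, the one figure with no supporting snippet. A production check would also need to compare the claims around each number, which usually calls for an LLM or a human reviewer.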

Best practices

Use a clear and specific Article Title to ensure relevant research.

Pair with Create Content Brief for the strongest content strategy alignment.

Include a Target Prompt if writing for AI-answer funnels.

Use snippets as grounding data in Prompt LLM steps.

Use output labels that make downstream references obvious (e.g., research_snippets).
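To illustrate using snippets as grounding data, the sketch below assembles a prompt that numbers each snippet and asks the model to cite by number. The function name, prompt wording, and the `snippet` and `url` keys are assumptions; adapt them to however your Prompt LLM step references the output label.

```python
def build_grounding_prompt(question: str, snippets: list[dict], limit: int = 10) -> str:
    """Build a prompt that restricts the model to the numbered evidence."""
    lines = [
        "Answer using only the numbered evidence below. Cite sources as [n].",
        "",
        "Evidence:",
    ]
    # Cap the evidence list so the prompt stays short.
    for number, record in enumerate(snippets[:limit], start=1):
        lines.append(f"[{number}] {record['snippet']} (source: {record['url']})")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


# Placeholder evidence, not a real research result.
prompt = build_grounding_prompt(
    "How does AI reduce logistics costs?",
    [{"snippet": "Placeholder fact one.", "url": "https://agency.example/report"}],
)
```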